This is my first time posting on this forum, so I apologise in advance if I inadvertently make any mistakes, and I welcome any suggestions for improving the question. I am running Ubuntu 22.04.1 on a laptop with the following relevant specifications:

RAM: 16GB

Storage: 1TB 5400RPM 2.5 inch HDD

I have an internet connection with a download speed of 150 Mbps (about 18.75 MB/s), and I have observed that the download speed for large files degrades once the disk cache has filled, which suggests that the hard disk is the bottleneck during large downloads. My combined RAM and cache usage is usually around 11GB, leaving 5GB of unused RAM.
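For anyone who wants to reproduce the observation, here is a minimal sketch of how the cache filling up can be watched. It relies on the `Dirty` (data waiting to be written to disk) and `Writeback` (data currently being written) counters in `/proc/meminfo`. The function name `show_writeback_state` is hypothetical and only used for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print the Dirty and Writeback counters.
# The optional argument is the file to read (defaults to /proc/meminfo).
show_writeback_state() {
    meminfo=${1:-/proc/meminfo}
    # The trailing colon keeps related counters such as WritebackTmp out.
    grep -E '^(Dirty|Writeback):' "$meminfo"
}
```

Running it in a loop during a download (for example `while sleep 1; do show_writeback_state; done`) shows whether `Dirty` keeps growing while the download speed drops.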

Question:

Is it possible to utilize the unused RAM to cache downloads? I would prefer a solution that works for downloads from all processes, not just manual downloads with wget or curl.

Potential solution:

Create a tmpfs filesystem, as mentioned here, to use as a RAM disk. Then use a combination of curl and pv, based on this and this, to download the file to RAM while simultaneously copying it to the hard disk at a sustainable speed, by limiting the transfer rate and maintaining a buffer.

I'm not sure how feasible this solution is, and I would highly appreciate some advice on better solutions. I was wondering if a method exists for caching downloads using the /tmp directory mounted as a tmpfs, but I can't think of a proper method of controlling how processes utilize the /tmp directory. I will be switching to a SATA SSD in the future, but I think this is an important problem for many users with fast internet connections and slow disks.

Thank you in advance for your responses.

Edit: Based on my understanding of memory management in Linux, even if the tmpfs size is larger than the currently free RAM, the kernel would drop cached data (page cache) to make room. In my case, processes tend to use only 4-5GB of RAM, leaving a lot of potential space for downloads.

Edit 2: I primarily need advice on automatically setting up a simultaneous download and transfer of files from /tmp to a final destination, and on how to deal with files larger than the free space in RAM.
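On the question of files larger than free RAM: a streaming buffer never has to hold the whole file, it only has to stay below the memory that is actually available. A rough sketch of picking such a size is shown here, assuming the `MemAvailable` field of `/proc/meminfo` is a reasonable estimate. The function name `suggest_buffer_size` and the choice of half the available memory are my own assumptions, not established practice.

```shell
#!/bin/sh
# Hypothetical helper: print half of MemAvailable as a size in MiB,
# with an "M" suffix (e.g. "2560M").
# The optional argument is the file to read (defaults to /proc/meminfo).
suggest_buffer_size() {
    meminfo=${1:-/proc/meminfo}
    # MemAvailable is reported in kB (KiB) in the second column.
    avail_kib=$(awk '/^MemAvailable:/ {print $2}' "$meminfo")
    if [ -z "$avail_kib" ]; then
        echo "MemAvailable not found in $meminfo" >&2
        return 1
    fi
    # Half of the available memory, converted from KiB to MiB.
    echo "$(( avail_kib / 2 / 1024 ))M"
}
```

The fraction is arbitrary; a smaller one leaves more headroom for other processes and for the page cache.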

Edit 3: As long as pv is guaranteed to buffer in RAM, the following command should do the trick:

curl "url" | pv -B buffer_size -L rate_limit > "outputfilepath"
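To make the command above easier to reuse, here is a minimal sketch that wraps it in a shell function. The function name `buffered_download` and its argument order are hypothetical. It still rests on the same assumption as the command itself, namely that pv reads ahead into its `-B` buffer while `-L` throttles the writes.

```shell
#!/bin/sh
# Hypothetical wrapper around the curl | pv pipeline.
# Usage: buffered_download URL OUTPUT_FILE BUFFER_SIZE RATE_LIMIT
buffered_download() {
    if [ "$#" -ne 4 ]; then
        echo "usage: buffered_download URL OUTPUT_FILE BUFFER_SIZE RATE_LIMIT" >&2
        return 2
    fi
    url=$1
    out=$2
    buffer=$3
    rate=$4
    # curl: -f fail on HTTP errors, -sS silent except for errors,
    #       -L follow redirects.
    # pv:   -q no progress display, -B in-memory buffer size,
    #       -L maximum transfer rate in bytes per second.
    # Note: in plain sh the return status is that of pv, not curl.
    curl -fsSL "$url" | pv -q -B "$buffer" -L "$rate" > "$out"
}
```

A call would look like `buffered_download "url" "outputfilepath" 2G 15M`, where the buffer size and rate limit are placeholder values that would need tuning to what the disk can sustain.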

Thanks to @cas for his help.

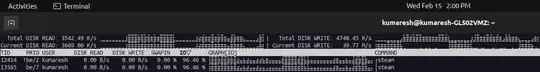

Edit 4: As requested, I have shared a screenshot of iotop. (Unfortunately, I couldn't copy the text or log it properly, so I cropped the screenshot to a size of 40KB.) I was downloading a game on Steam; the I/O wait percentage frequently spiked to 80-100%, and write speeds were inconsistent, falling below the download speed on many occasions. Since my OS is installed on the same HDD, these I/O wait spikes make the system highly unresponsive. The actual disk writes show up under the mount.ntfs process, since my game library is stored on an NTFS partition, so they're not visible in the screenshot. The disk is also fully defragmented.