I have NFS working much better now on RHEL 7.9, over a wired 1 Gbps LAN. The mount shows up as vers=4.1. Initially I was using sync and getting a consistent ~55 MB/sec when copying a data_70gb.tar file with rsync -P.

Since changing sync to async, I get as high as 270 MB/sec for 1-2 minutes, and for files of a few gigabytes that is also the average for the whole copy. With my 70 GB tar file, though, rsync initially estimates around 4.5 minutes at ~250 MB/sec, but the copy ends up taking about 7 minutes at an average of 160 MB/sec. About 5 times during the copy, the speed dropped to around 20 MB/sec for not much more than a minute, then ramped back up to around 250 MB/sec.
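As a quick sanity check on those figures, here is a minimal arithmetic sketch. It assumes decimal units (70 GB = 70,000 MB) and that the file is exactly 70 GB; the helper names `transfer_seconds` and `average_rate` are made up for illustration.

```python
def transfer_seconds(size_mb: float, rate_mb_s: float) -> float:
    """Time to move size_mb at a constant rate_mb_s."""
    return size_mb / rate_mb_s


def average_rate(size_mb: float, seconds: float) -> float:
    """Average throughput over the whole copy."""
    return size_mb / seconds


# Assumption: decimal units, and the tar file is exactly 70 GB.
SIZE_MB = 70 * 1000

expected_min = transfer_seconds(SIZE_MB, 250) / 60
observed_rate = average_rate(SIZE_MB, 7 * 60)

print(f"expected at 250 MB/s: {expected_min:.1f} min")
print(f"average over 7 min:   {observed_rate:.0f} MB/s")
```

Under those assumptions this works out to roughly 4.7 minutes at a steady 250 MB/sec, and about 167 MB/sec averaged over 7 minutes, which is in line with the estimate and the final average that rsync reported.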

Why would this happen? I am using proto=tcp. The servers have 192 GB of RAM and use 12 Gbps SAS SSDs. The tar file was newly created just before running rsync, so I believe Linux disk caching should be in effect and disk I/O should not be a factor (but correct me if I'm wrong here).
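One way to test the caching assumption might be to watch the page-cache counters in `/proc/meminfo` on both machines while the copy runs. The sketch below is only a diagnostic aid, not an explanation of the slowdown; the function names `parse_meminfo` and `cache_snapshot` are hypothetical, and it assumes the standard `Cached`, `Dirty`, and `Writeback` fields are present.

```python
import time


def parse_meminfo(text: str) -> dict:
    """Parse /proc/meminfo-style text into {field_name: integer value}."""
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])  # most fields are in kB
    return values


def cache_snapshot(path: str = "/proc/meminfo") -> dict:
    """Return the cache-related counters (in kB) from the given file."""
    with open(path) as f:
        info = parse_meminfo(f.read())
    return {key: info.get(key) for key in ("Cached", "Dirty", "Writeback")}


if __name__ == "__main__":
    # Print one snapshot per second; stop with Ctrl-C.
    while True:
        print(cache_snapshot())
        time.sleep(1)
```

If `Dirty` or `Writeback` climbs and then drains around the times the rate drops to ~20 MB/sec, that would suggest disk I/O is involved after all; if they stay flat, the cause is more likely elsewhere.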

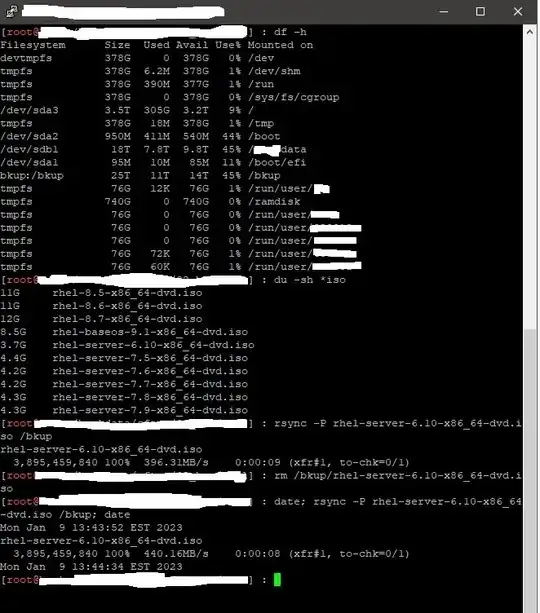

Screenshot of the SSH window showing rsync: