I have a QNAP HS-251+ NAS with two 3TB WD Red NAS hard drives in a RAID1 configuration. I'm quite familiar with Linux and CLI tools, but I have never used mdadm or LVM, so although I now know that QNAP uses them, I have no expertise with them and no idea how QNAP assembles the RAID1 array. Some weeks ago, during a firmware update, the NAS stopped booting. I'm working on that problem, but I would like to recover my data before plugging the disks back into the NAS (QNAP HelpDesk has been basically useless).

I expected to be able to mount the partition simply by plugging one of the disks into my laptop (Kubuntu), but that is when I discovered that things were more complex than that.

This is the relevant information I managed to extract:

xabi@XV-XPS15:~$ sudo dmesg

[...]

[17272.730964] usb 3-1: new high-speed USB device number 3 using xhci_hcd

[17272.884120] usb 3-1: New USB device found, idVendor=059b, idProduct=0475, bcdDevice= 0.00

[17272.884138] usb 3-1: New USB device strings: Mfr=1, Product=2, SerialNumber=5

[17272.884144] usb 3-1: Product: USB to ATA/ATAPI Bridge

[17272.884149] usb 3-1: Manufacturer: JMicron

[17272.884153] usb 3-1: SerialNumber: DCC4108FFFFF

[17272.891498] usb-storage 3-1:1.0: USB Mass Storage device detected

[17272.892117] scsi host6: usb-storage 3-1:1.0

[17273.907765] scsi 6:0:0:0: Direct-Access WDC WD30 EFRX-68EUZN0 PQ: 0 ANSI: 2 CCS

[17273.908085] sd 6:0:0:0: Attached scsi generic sg2 type 0

[17273.908261] sd 6:0:0:0: [sdc] 1565565872 512-byte logical blocks: (802 GB/747 GiB)

[17273.909041] sd 6:0:0:0: [sdc] Write Protect is off

[17273.909046] sd 6:0:0:0: [sdc] Mode Sense: 34 00 00 00

[17273.909789] sd 6:0:0:0: [sdc] Write cache: disabled, read cache: enabled, doesn't support DPO or FUA

[17273.976961] sd 6:0:0:0: [sdc] Attached SCSI disk

xabi@XV-XPS15:~$

xabi@XV-XPS15:~$ sudo fdisk -l /dev/sdc

Disk /dev/sdc: 746,52 GiB, 801569726464 bytes, 1565565872 sectors

Disk model: EFRX-68EUZN0

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: dos

Disk identifier: 0x00000000

Device Boot Start End Sectors Size Id Type

/dev/sdc1 1 4294967295 4294967295 2T ee GPT

xabi@XV-XPS15:~$ sudo mount /dev/sdc1 /mnt/

mount: /mnt: special device /dev/sdc1 does not exist.

xabi@XV-XPS15:~$

xabi@XV-XPS15:~$ sudo parted -l

[...]

Error: Invalid argument during seek for read on /dev/sdc

Retry/Ignore/Cancel? i

Error: The backup GPT table is corrupt, but the primary appears OK, so that will be used.

OK/Cancel? ok

Model: WDC WD30 EFRX-68EUZN0 (scsi)

Disk /dev/sdc: 802GB

Sector size (logical/physical): 512B/512B

Partition Table: unknown

Disk Flags:

xabi@XV-XPS15:~$

xabi@XV-XPS15:~$ sudo lsblk

[...]

sdc 8:32 0 746,5G 0 disk

xabi@XV-XPS15:~$

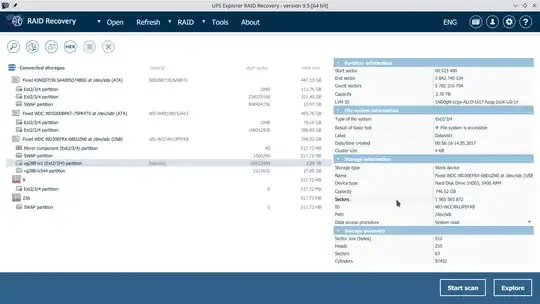

Using the free version of a commercial tool (UFS RAID Recovery) I've obtained advanced information about the partitions on the disk:

and even recovered the lvm backup config

# Generated by LVM2 version 2.02.138(2)-git (2015-12-14): Fri Feb 11 09:43:53 2022

contents = "Text Format Volume Group"

version = 1

description = "Created *after* executing '/sbin/vgchange vg288 --addtag cacheVersion:3'"

creation_host = "XV-NAS" # Linux XV-NAS 5.10.60-qnap #1 SMP Tue Dec 21 10:57:31 CST 2021 x86_64

creation_time = 1644569033 # Fri Feb 11 09:43:53 2022

vg288 {

id = "g0F3zh-N3aN-1vEQ-qYU4-6Jhv-xgLC-d4hU43"

seqno = 199

format = "lvm2" # informational

status = ["RESIZEABLE", "READ", "WRITE"]

flags = []

tags = ["PV:DRBD", "PoolType:Static", "StaticPoolRev:2", "cacheVersion:3"]

extent_size = 8192 # 4 Megabytes

max_lv = 0

max_pv = 0

metadata_copies = 0

physical_volumes {

pv0 {

id = "ZcFzMw-ZgzA-fOr0-FGgI-E3hc-uwKu-GzWSKn"

device = "/dev/drbd1" # Hint only

status = ["ALLOCATABLE"]

flags = []

dev_size = 5840621112 # 2.71975 Terabytes

pe_start = 2048

pe_count = 712966 # 2.71975 Terabytes

}

}

logical_volumes {

lv544 {

id = "NRzjb8-Dgw4-oHSj-rjtF-2e8H-KQZ2-YPRre3"

status = ["READ", "WRITE", "VISIBLE"]

flags = []

creation_host = "NAS0D80FB"

creation_time = 1494716166 # 2017-05-14 00:56:06 +0200

read_ahead = 8192

segment_count = 1

segment1 {

start_extent = 0

extent_count = 7129 # 27.8477 Gigabytes

type = "striped"

stripe_count = 1 # linear

stripes = [

"pv0", 0

]

}

}

lv1 {

id = "1ND0gN-Lcgx-ALcO-Ui17-hzzg-2soX-LGr1rl"

status = ["READ", "WRITE", "VISIBLE"]

flags = []

creation_host = "NAS0D80FB"

creation_time = 1494716174 # 2017-05-14 00:56:14 +0200

read_ahead = 8192

segment_count = 1

segment1 {

start_extent = 0

extent_count = 705837 # 2.69255 Terabytes

type = "striped"

stripe_count = 1 # linear

stripes = [

"pv0", 7129

]

}

}

}

}

Despite that, I haven't been able to mount the relevant volume (vg288-lv1). As I said, I'm not familiar with LVM, and there are some inconsistencies with what I've seen in other posts (no sdc1 device, parted can't find the partitions, the reported size doesn't match the real one...), so I'm not sure how to approach this methodically.

I've tried using the backup config as a template to restore the LVM volumes and failed, but, again, I'm not sure that I'm doing things correctly. I feel I need some guidance on where to start, as the results of my tests so far would only add confusion. Thanks.

UPDATE:

As @user7138814 suggested, it seems that the WD30EFRX is an "Advanced Format" disk, meaning 4k (physical) sectors, and some SATA-USB adapters don't support this. Mine was old, so I got a new one, and all the weird inconsistencies disappeared:

# dmesg

[...]

[74820.751587] usb 4-1: new SuperSpeed USB device number 4 using xhci_hcd

[74820.779219] usb 4-1: New USB device found, idVendor=174c, idProduct=1153, bcdDevice= 1.00

[74820.779235] usb 4-1: New USB device strings: Mfr=2, Product=3, SerialNumber=1

[74820.779241] usb 4-1: Product: Ugreen Storage Device

[74820.779245] usb 4-1: Manufacturer: Ugreen

[74820.779249] usb 4-1: SerialNumber: 26A1EE833D7E

[74820.787123] scsi host7: uas

[74820.788495] scsi 7:0:0:0: Direct-Access WDC WD30 EFRX-68EUZN0 0 PQ: 0 ANSI: 6

[74820.789417] sd 7:0:0:0: Attached scsi generic sg2 type 0

[74820.789908] sd 7:0:0:0: [sdd] 5860533168 512-byte logical blocks: (3.00 TB/2.73 TiB)

[74820.789918] sd 7:0:0:0: [sdd] 4096-byte physical blocks

[74820.790061] sd 7:0:0:0: [sdd] Write Protect is off

[74820.790067] sd 7:0:0:0: [sdd] Mode Sense: 43 00 00 00

[74820.790252] sd 7:0:0:0: [sdd] Write cache: enabled, read cache: enabled, doesn't support DPO or FUA

[74820.790688] sd 7:0:0:0: [sdd] Optimal transfer size 33553920 bytes not a multiple of physical block size (4096 bytes)

[74820.849288] sdd: sdd1 sdd2 sdd3 sdd4 sdd5

[74820.891810] sd 7:0:0:0: [sdd] Attached SCSI disk

# fdisk -l /dev/sdd

Disk /dev/sdd: 2.73 TiB, 3000592982016 bytes, 5860533168 sectors

Disk model: EFRX-68EUZN0

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 4096 bytes

I/O size (minimum/optimal): 4096 bytes / 4096 bytes

Disklabel type: gpt

Disk identifier: EA97E765-8FD0-4C41-8AEA-8B7570F42D68

Device Start End Sectors Size Type

/dev/sdd1 40 1060289 1060250 517.7M Microsoft basic data

/dev/sdd2 1060296 2120579 1060284 517.7M Microsoft basic data

/dev/sdd3 2120584 5842744109 5840623526 2.7T Microsoft basic data

/dev/sdd4 5842744112 5843804399 1060288 517.7M Microsoft basic data

/dev/sdd5 5843804408 5860511999 16707592 8G Microsoft basic data

# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

[...]

sdd 8:48 0 2.7T 0 disk

|-sdd1 8:49 0 517.7M 0 part

|-sdd2 8:50 0 517.7M 0 part

|-sdd3 8:51 0 2.7T 0 part

|-sdd4 8:52 0 517.7M 0 part

`-sdd5 8:53 0 8G 0 part
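A side note on the size mismatch, offered as an observation rather than a confirmed diagnosis: the sector count reported through the old adapter is exactly the real sector count modulo 2^32, which is what a 32-bit sector counter in the bridge would produce. The sketch below only redoes that arithmetic with the numbers from the two dmesg outputs; the function name is mine, and the 32-bit counter is an assumption about the old adapter, not something I verified.

```python
# Sector counts copied from the two dmesg outputs in this post.
REAL_SECTORS = 5860533168   # reported through the new adapter (sdd)
OLD_SECTORS = 1565565872    # reported through the old adapter (sdc)
SECTOR_BYTES = 512          # logical sector size in both cases


def truncate_to_32_bits(sectors: int) -> int:
    """Return the value a 32-bit sector counter would hold (assumed behaviour)."""
    return sectors % (1 << 32)


wrapped = truncate_to_32_bits(REAL_SECTORS)

# Compare the wrapped count with what the old adapter reported.
print("wrapped sector count:", wrapped)
print("matches old adapter: ", wrapped == OLD_SECTORS)

# The byte size derived from the wrapped count is the size fdisk printed for sdc.
print("wrapped size in bytes:", wrapped * SECTOR_BYTES)
```

If the two values match, the old adapter was most likely exposing only the low 32 bits of the disk size, which would also explain why the GPT and its backup copy could not be read consistently.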

SOLUTION

Loosely following the instructions at https://forum.qnap.com/viewtopic.php?t=156819, I managed to mount the partition and recover the data. These are the steps I followed:

Force mdadm to examine the disk:

# mdadm --examine --scan

ARRAY /dev/md/9 metadata=1.0 UUID=2b29da4f:f725eb04:e2ac3e60:37023bbd name=9

ARRAY /dev/md/256 metadata=1.0 UUID=ed18a26a:4e9ca5f1:aca74d2f:99cb409b name=256

ARRAY /dev/md/1 metadata=1.0 UUID=111bee9e:d34c75c0:87822b63:fbcd271d name=1

ARRAY /dev/md/13 metadata=1.0 UUID=d410ebe5:a4158d63:08559db0:795abb11 name=13

ARRAY /dev/md/322 metadata=1.0 UUID=4bd0cd6d:fcc98341:329fab8c:a32f5495 name=322

Check that the RAID array was recognized:

# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

[...]

sdd 8:48 0 2.7T 0 disk

|-sdd1 8:49 0 517.7M 0 part

|-sdd2 8:50 0 517.7M 0 part

|-sdd3 8:51 0 2.7T 0 part

| `-md127 9:127 0 2.7T 0 raid1

|-sdd4 8:52 0 517.7M 0 part

`-sdd5 8:53 0 8G 0 part

# cat /proc/mdstat

Personalities : [linear] [multipath] [raid0] [raid1] [raid6] [raid5] [raid4] [raid10]

md127 : active (auto-read-only) raid1 sdd3[1]

2920311616 blocks super 1.0 [2/1] [_U]

md123 : inactive sdc1[1]

530108 blocks super 1.0

md124 : inactive sdc5[1]

8353780 blocks super 1.0

md125 : inactive sdc2[1]

530124 blocks super 1.0

md126 : inactive sdc4[1]

530128 blocks super 1.0

unused devices: <none>
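For anyone repeating this on a machine with many md devices, the /proc/mdstat text can be summarised with a few lines of Python. This is only a minimal sketch: the sample text is two entries copied from the output above, `parse_mdstat` is a name I made up, and the regular expressions cover just the line shapes seen in this post. In the `[2/1]` field the first number is how many members the array expects and the second how many are working, so md127 shows up as degraded, which is expected with only one of the two mirror disks attached.

```python
import re

# Two entries copied from the /proc/mdstat output above.
MDSTAT = """\
md127 : active (auto-read-only) raid1 sdd3[1]
      2920311616 blocks super 1.0 [2/1] [_U]
md123 : inactive sdc1[1]
      530108 blocks super 1.0
"""


def parse_mdstat(text):
    """Map each md device to its state, size in KiB and member counts."""
    arrays = {}
    current = None
    for line in text.splitlines():
        head = re.match(r"(md\d+) : (active|inactive)", line)
        if head:
            current = head.group(1)
            arrays[current] = {"state": head.group(2), "kib": None,
                               "expected": None, "working": None}
            continue
        if current is None:
            continue
        size = re.search(r"(\d+) blocks", line)
        if size:
            # /proc/mdstat reports sizes in 1 KiB blocks.
            arrays[current]["kib"] = int(size.group(1))
        slots = re.search(r"\[(\d+)/(\d+)\]", line)
        if slots:
            arrays[current]["expected"] = int(slots.group(1))
            arrays[current]["working"] = int(slots.group(2))
    return arrays


for name, info in parse_mdstat(MDSTAT).items():
    degraded = info["expected"] is not None and info["working"] < info["expected"]
    note = "degraded" if degraded else ""
    print(name, info["state"], f'{info["kib"] / 2**20:.2f} GiB', note)
```

Only the active array (md127 here) is of interest for the data; the inactive ones belong to the small system partitions.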

Identify the physical volumes (the warning can be ignored):

# pvdisplay

WARNING: PV /dev/md127 in VG vg288 is using an old PV header, modify the VG to update.

--- Physical volume ---

PV Name /dev/md127

VG Name vg288

PV Size <2.72 TiB / not usable <1.78 MiB

Allocatable yes (but full)

PE Size 4.00 MiB

Total PE 712966

Free PE 0

Allocated PE 712966

PV UUID ZcFzMw-ZgzA-fOr0-FGgI-E3hc-uwKu-GzWSKn

Identify the volume group:

# vgdisplay vg288

WARNING: PV /dev/md127 in VG vg288 is using an old PV header, modify the VG to update.

--- Volume group ---

VG Name vg288

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 199

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 2

Open LV 0

Max PV 0

Cur PV 1

Act PV 1

VG Size <2.72 TiB

PE Size 4.00 MiB

Total PE 712966

Alloc PE / Size 712966 / <2.72 TiB

Free PE / Size 0 / 0

VG UUID g0F3zh-N3aN-1vEQ-qYU4-6Jhv-xgLC-d4hU43

Identify the logical volumes:

# lvdisplay

WARNING: PV /dev/md127 in VG vg288 is using an old PV header, modify the VG to update.

--- Logical volume ---

LV Path /dev/vg288/lv544

LV Name lv544

VG Name vg288

LV UUID NRzjb8-Dgw4-oHSj-rjtF-2e8H-KQZ2-YPRre3

LV Write Access read/write

LV Creation host, time NAS0D80FB, 2017-05-14 00:56:06 +0200

LV Status NOT available

LV Size <27.85 GiB

Current LE 7129

Segments 1

Allocation inherit

Read ahead sectors 8192

--- Logical volume ---

LV Path /dev/vg288/lv1

LV Name lv1

VG Name vg288

LV UUID 1ND0gN-Lcgx-ALcO-Ui17-hzzg-2soX-LGr1rl

LV Write Access read/write

LV Creation host, time NAS0D80FB, 2017-05-14 00:56:14 +0200

LV Status NOT available

LV Size 2.69 TiB

Current LE 705837

Segments 1

Allocation inherit

Read ahead sectors 8192
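As a sanity check, the sizes that lvdisplay reports can be reproduced from the extent counts, and the recovered backup config earlier in the post also gives where each LV starts inside the PV. The sketch below is just that arithmetic, assuming 512-byte sectors and taking extent_size, pe_start, pe_count and the stripes entries from the backup config; the helper name `lv_layout` is hypothetical.

```python
SECTOR_BYTES = 512        # assumed unit for extent_size and pe_start
EXTENT_SECTORS = 8192     # extent_size from the backup config (4 MiB)
PE_START = 2048           # pe_start of pv0, in sectors
PE_COUNT = 712966         # pe_count of pv0

# name -> (first extent on pv0, extent_count), taken from the stripes entries
LVS = {"lv544": (0, 7129), "lv1": (7129, 705837)}


def lv_layout(first_extent, extent_count):
    """Return (start sector within the PV, size in bytes) for a linear LV."""
    start_sector = PE_START + first_extent * EXTENT_SECTORS
    size_bytes = extent_count * EXTENT_SECTORS * SECTOR_BYTES
    return start_sector, size_bytes


for name, (first, count) in LVS.items():
    start, size = lv_layout(first, count)
    print(f"{name}: starts at PV sector {start}, size {size / 2**30:.2f} GiB")

# The two LVs together should use every extent of the PV ("Free PE 0").
print("all extents allocated:", sum(c for _, c in LVS.values()) == PE_COUNT)
```

The computed sizes should agree with the "LV Size" fields above, and the extent total with "Allocated PE" in the pvdisplay output.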

Activate the required volume:

# lvchange -ay /dev/vg288/lv1

WARNING: PV /dev/md127 in VG vg288 is using an old PV header, modify the VG to update.

Mount it read-only and check that it's accessible:

# mount -t ext4 -o ro /dev/vg288/lv1 /mnt

# ls /mnt

Container Multimedia Public Web core-hal_callhome_co core-hal_lvm_check hal_daemon_seg_fault.log lost+found

Download Musica Samples aquota.user core-hal_daemon core-hal_netlink homes qsync-homes

Imaxes Pelis Series aquota.user.new core-hal_enc_reset hal_daemon_fail.log htdocs