After rebooting my server I get the following error message:

Begin: Running /scripts/init-premount … done.

Begin: Mounting root file system …

Begin: Running /scripts/local-top …

Volume group “ubuntu-vg” not found

Cannot process volume group ubuntu-vg

Begin: Running /scripts/local-premount …

...

Begin: Waiting for root file system …

Begin: Running /scripts/local-block …

mdadm: No arrays found in config file or automatically

Volume group “ubuntu-vg” not found

Cannot process volume group ubuntu-vg

mdadm: No arrays found in config file or automatically # <-- approximately 30 times

mdadm: error opening /dev/md?*: No such file or directory

done.

Gave up waiting for root file system device.

Common problems:

- Boot args (cat /proc/cmdline)

- Check rootdelay= (did the system wait long enough?)

- Missing modules (cat /proc/modules; ls /dev)

ALERT! /dev/mapper/ubuntu--vg-ubuntu--lv does not exist. Dropping to a shell!

The system drops to the initramfs shell (BusyBox), where lvm vgscan doesn't find any volume groups and ls /dev/mapper shows only one entry, control.
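A quick way to tell whether the problem sits below LVM is to check if the kernel sees the RAID volume at all. The following is a minimal sketch for the initramfs shell, using only shell builtins and grep; the function names are made up for illustration. It assumes the usual /proc/partitions layout (a header line, a blank line, then one device per line).

```shell
# Print the block device names the kernel currently knows about.
# Reads /proc/partitions by default; another file can be passed for testing.
list_block_devices() {
    while read -r major minor blocks name; do
        case "$major" in
            ''|major) continue ;;   # skip the blank line and the header line
        esac
        echo "$name"
    done < "${1:-/proc/partitions}"
}

# Succeed if the given device name (e.g. sdc3) is listed.
has_block_device() {
    list_block_devices "$2" | grep -qx "$1"
}

# Example (not run automatically):
#   has_block_device sdc3 || echo "kernel does not see sdc3"
```

If the PV partition is not listed, LVM has nothing to scan, which would point at the controller or its driver rather than at the volume group itself. The device name may differ between the initramfs and the rescue system.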

When I boot the SystemRescueCD live system, the volume group is found and the LV is available as usual at /dev/mapper/ubuntu--vg-ubuntu--lv. I am able to mount it, and the VG is set to active. So the VG and the LV look fine, but something seems to break during the boot process.

The system runs Ubuntu 20.04 Server with LVM on top of a hardware RAID 1+0 of four SSDs. The server is an HPE ProLiant DL380 Gen10. The RAID controller is an HPE Smart Array P408i-p SR Gen10 with firmware version 3.00, and the four HPE SSDs are model MK001920GWXFK.

No software raid, no encryption.

Any hints on how to find the error and fix the problem?

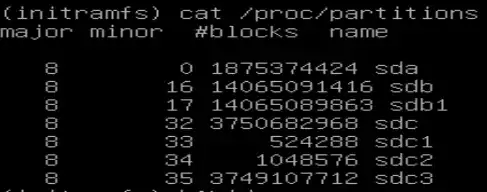

EDIT I:

The partitions are laid out as follows:

- /dev/sdc1 is /boot/efi

- /dev/sdc2 is /boot

- /dev/sdc3 is the PV

Booting from an older kernel version worked once, until I ran apt update && apt upgrade. After the upgrade, the older kernel showed the same issue.

EDIT II:

In /proc/modules I can find the following entry:

smartpqi 81920 0 - Live 0xffffffffc0626000
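To make this check repeatable, the state column of /proc/modules can be read with a small helper. This is only a sketch; module_state is a hypothetical name, and it assumes the standard six-column /proc/modules format (name, size, reference count, dependents, state, address).

```shell
# Print the state (e.g. "Live") of a kernel module, or fail if it is not loaded.
# Reads /proc/modules by default; another file can be passed for testing.
module_state() {
    while read -r name size refs deps state addr; do
        if [ "$name" = "$1" ]; then
            echo "$state"
            return 0
        fi
    done < "${2:-/proc/modules}"
    return 1
}

# Example (not run automatically):
#   module_state smartpqi || echo "smartpqi is not loaded"
```

A loaded module does not prove that the driver bound to the controller, so looking at the kernel log as well (for example `dmesg | grep -i smartpqi`) may show whether the controller and its logical drives were detected.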

lvm pvs produces no output in the initramfs shell.

Output of lvm pvchange -ay -v:

No volume groups found.

Output of lvm pvchange -ay --partial vg-ubuntu -v:

PARTIAL MODE. Incomplete logical volumes will be processed.

VG name on command line not found in list of VGs: vg-ubuntu

Volume group "vg-ubuntu" not found

Cannot process volume group vg-ubuntu

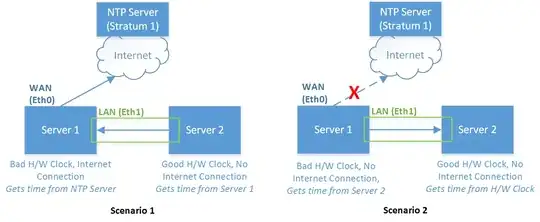

There is a second RAID controller of the same model (P408i-p SR Gen10) with HDDs, connected to another PCI slot. A volume group named "cinder-volumes" is configured on top of this RAID. This VG can't be found in the initramfs either.

EDIT III:

Here is a link to the requested files from the root FS:

- /mnt/var/log/apt/term.log

- /mnt/etc/initramfs-tools/initramfs.conf

- /mnt/etc/initramfs-tools/update-initramfs.conf

EDIT IV:

From SystemRescueCD I mounted the root LV (/), /boot and /boot/efi, and chrooted into the root LV. All mounted volumes have enough free disk space (usage below 32%).
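For reference, the chroot procedure can be sketched as below. The function only prints the commands so they can be reviewed before being run as root. The device names are the ones given in this post. The bind mounts of /dev, /proc and /sys are an assumption: the post does not say whether they were used, but tools run inside the chroot generally need them to see the hardware. print_chroot_plan is a hypothetical helper name.

```shell
# Print the mount and chroot commands for the layout described in this post.
# Arguments (all optional): root device, /boot device, EFI device, mount point.
print_chroot_plan() {
    root_dev=${1:-/dev/mapper/ubuntu--vg-ubuntu--lv}
    boot_dev=${2:-/dev/sdc2}
    efi_dev=${3:-/dev/sdc1}
    target=${4:-/mnt}
    cat <<EOF
mount $root_dev $target
mount $boot_dev $target/boot
mount $efi_dev $target/boot/efi
mount --bind /dev $target/dev
mount --bind /proc $target/proc
mount --bind /sys $target/sys
chroot $target /bin/bash
EOF
}

# Example (not run automatically):
#   print_chroot_plan
```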

The output of update-initramfs -u -k 5.4.0-88-generic is:

update-initramfs: Generating /boot/initrd.img-5.4.0-88-generic

W: mkconf: MD subsystem is not loaded, thus I cannot scan for arrays.

W: mdadm: failed to auto-generate temporary mdadm.conf file

Afterwards, the image /boot/initrd.img-5.4.0-88-generic has an updated modification date.

Problem remains after rebooting.
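One way to narrow this down further is to check whether the regenerated image actually contains the controller driver and the lvm binary. The sketch below reads a file listing on standard input, as produced by lsinitramfs from initramfs-tools. check_initrd_listing is a hypothetical helper, and the two patterns are assumptions about what this particular setup needs at boot.

```shell
# Read an initramfs file listing on stdin and report whether the
# smartpqi driver and the lvm binary are part of it.
# Returns non-zero if either one is missing.
check_initrd_listing() {
    listing=$(cat)
    rc=0
    for pattern in 'smartpqi\.ko' 'sbin/lvm$'; do
        if printf '%s\n' "$listing" | grep -q "$pattern"; then
            echo "found:   $pattern"
        else
            echo "missing: $pattern"
            rc=1
        fi
    done
    return "$rc"
}

# Example (not run automatically):
#   lsinitramfs /boot/initrd.img-5.4.0-88-generic | check_initrd_listing
```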

The initrd entries in the GRUB menu configuration /boot/grub/grub.cfg point to /initrd.img-5.4.0-XX-generic, where XX differs per menu entry: 88, 86 and 77.

In the /boot directory I can find several kernel images:

vmlinuz-5.4.0-88-generic

vmlinuz-5.4.0-86-generic

vmlinuz-5.4.0-77-generic

The symlink /boot/initrd.img points to the latest version, /boot/initrd.img-5.4.0-88-generic.
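To cross-check that every initrd referenced by GRUB really exists, the paths can be pulled out of grub.cfg and compared with the contents of /boot. This is a sketch under the assumption that /boot is a separate partition, so a path such as /initrd.img-5.4.0-88-generic in grub.cfg corresponds to /boot/initrd.img-5.4.0-88-generic. Both function names are made up for illustration.

```shell
# Print the unique initrd paths referenced in a grub.cfg.
list_grub_initrds() {
    sed -n 's/^[[:space:]]*initrd[[:space:]][[:space:]]*\([^[:space:]]*\).*/\1/p' \
        "${1:-/boot/grub/grub.cfg}" | sort -u
}

# Check that each referenced initrd exists and is non-empty in the boot directory.
# Returns non-zero if at least one image is missing.
check_grub_initrds() {
    cfg=${1:-/boot/grub/grub.cfg}
    boot_dir=${2:-/boot}
    rc=0
    for path in $(list_grub_initrds "$cfg"); do
        if [ -s "$boot_dir/${path#/}" ]; then
            echo "ok:      $path"
        else
            echo "missing: $path"
            rc=1
        fi
    done
    return "$rc"
}

# Example (not run automatically):
#   check_grub_initrds /boot/grub/grub.cfg /boot
```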

EDIT V:

Since none of these measures led to the desired result and the effort to rescue the system became too great, I had to rebuild the server completely.