I have a Linux NAS that I have been using for a few years. I originally created the mdadm array on Debian, using the command line.

At the time, the OS was installed on a 16GB SanDisk USB 3 drive, and the array was set up as RAID level 6 with 6 identical brand-new WD Red 3TB disks. Later, I upgraded the OS drive to a Transcend 120GB NVMe SSD and replaced the OS with OpenMediaVault (OMV). That setup ran fine for over a year. However, the NVMe SSD failed recently, and it is no longer writable.

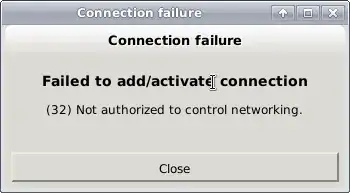

So I replaced it with a Samsung NVMe drive and installed OMV from scratch. OMV detected the array as clean but degraded (5 disks; 1 was not added to the array). While trying to fix this, I mistakenly DELETED the mdadm array from the OpenMediaVault UI, and now it seems the superblocks of all 5 disks have been wiped clean. :-(

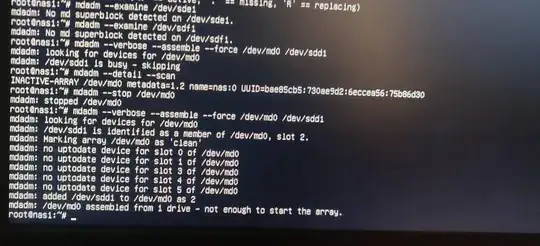

I had taken multiple backups of the mdadm.conf file, but I am no longer sure where I stored them. The attached screenshots show the 6th disk's superblock, which was not wiped because OMV's mdadm did not detect that disk. It seems to contain the details of the original array.
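For anyone who wants the same details as text rather than screenshots, here is a minimal sketch of how the surviving superblock could be dumped and trimmed down to the geometry fields. The device node `/dev/sdf1` is only an assumption (I am not sure which node the 6th disk currently gets), `filter_fields` is a helper name I made up, and the field labels assume version 1.x metadata. As far as I understand, `mdadm --examine` only reads the superblock and does not write to the disk.

```shell
#!/bin/sh
# DISK is an assumption: replace it with the node of the disk that kept its superblock.
DISK="${DISK:-/dev/sdf1}"

# Hypothetical helper: keep only the fields that describe the array geometry.
filter_fields() {
    grep -E '^ *(Version|Array UUID|Raid Level|Raid Devices|Chunk Size|Layout|Data Offset|Device Role) :'
}

# Only run mdadm when the block device actually exists on this machine.
if [ -b "$DISK" ]; then
    mdadm --examine "$DISK" | filter_fields
fi
```

The chunk size, layout, data offset, and device role from this output are the values I would have to match if the array has to be re-created.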

While the array mostly contains Linux ISOs, music, and movies, I also have some invaluable data on those hard disks, such as family photos. Is there any way I can re-mount the array without losing the data?

I can confirm that the disks were all in healthy condition, and I have not formatted them since creating the array. However, while I was cleaning dust out of the system, I might have changed the order of the disks on the SATA connections. I also do not remember whether I created the array with /dev/sda1 /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1 /dev/sdf1 or with /dev/sda /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf.
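A read-only way to narrow down the partition question might look like the sketch below. It assumes the partition tables survived the deletion, which I have not verified, and `summarise_layout` is a hypothetical helper name of my own. It reads the `NAME TYPE` pairs printed by `lsblk -rno NAME,TYPE` and counts the partitions found on each disk.

```shell
#!/bin/sh
# Hypothetical helper: read "NAME TYPE" pairs (as printed by `lsblk -rno NAME,TYPE`)
# and print how many partitions each disk carries.
summarise_layout() {
    awk '
        $2 == "disk" { disks[++n] = $1; parts[$1] = 0 }
        $2 == "part" {
            # A partition name starts with the name of its parent disk (sda1 -> sda).
            for (i = 1; i <= n; i++)
                if (index($1, disks[i]) == 1) parts[disks[i]]++
        }
        END {
            for (i = 1; i <= n; i++)
                printf "%s: %d partition(s)\n", disks[i], parts[disks[i]]
        }
    '
}

# lsblk only lists block devices; it does not modify them.
if command -v lsblk >/dev/null 2>&1; then
    lsblk -rno NAME,TYPE | summarise_layout
fi
```

If every WD Red shows exactly one partition, that would suggest the array was built on /dev/sdX1 rather than on the whole disks, though I would treat that as a hint and not as proof. For the SATA ordering, `lsblk -d -o NAME,SERIAL` should let me match each device node to a physical disk by its serial number.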

I read somewhere that, as long as I have not manually formatted those disks, I can re-create the array (with --assume-clean) and then mount and use it like before. Is that true? Should I try these commands?

mdadm --zero /dev/sda1 /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1 /dev/sdf1

mdadm -v --create /dev/md0 --assume-clean --level=raid6 --chunk 256 --raid-devices=6 /dev/sda1 /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1 /dev/sdf1

Is this safe and non-destructive?