I have a Proxmox (PVE) installation on an old server and wanted to swap the old drives to get more space. But first I wanted to move the PVE installation to an SSD. Simple enough.

Starting Point:

- 1x76GB SATA (PVE Root LVM)

- 2x500GB SATA (VG1 configured as RAID1 with mdadm - LVM Thinpool)

End Goal:

- 1x120GB SSD (PVE Root LVM)

- 2x500GB SATA (VG1 for a while longer)

- 1x2000GB SATA* (in VG1 in order to pvmove all files to this drive from the old RAID)

*This drive is to be configured in an mdraid setup with another 2TB drive which is to be added at a later time (due to lack of cables and SATA ports).

I inserted the SSD and booted up a live USB with Linux, ran a dd command I found online to copy the old 76GB drive to the new (but used) 120GB SSD. This worked out ok, apart from the disk still showing 76GB in size.

In hindsight, I'm not exactly sure what I did to fix this. Looking at the shell history, I believe I ran the following commands:

echo 1 > /sys/class/scsi_device/0\:0\:0\:0/device/rescan

parted -l

# At this point I got a few questions and I chose Fix and/or Ignore until it was finished

pvresize /dev/sda3

lvresize /dev/pve/data -l 100%FREE

Now I thought I was done and started working with my next item on the list. This is where I met my late night brain Stu Pid.

I created the RAID with the one drive

mdadm --create /dev/md2 --level 1 --raid-devices 2 /dev/sdd missing

# Next, I forgot to RTFM..

mkfs.ext4 /dev/md2

# I actually aborted the above command Ctrl-C...

# Uhhhh. I created the Physical Volume and extended the VG

pvcreate /dev/md2

vgextend vg1 /dev/md2

# Next, I dunno

lvextend /dev/vg1/tpool /dev/md2

resize2fs /dev/mapper/vg1-tpool

# Again what? And now something from YouTube

/usr/share/mdadm/mkconf > /etc/mdadm/mdadm.conf

dpkg-reconfigure pve-kernel-`uname -r`

From this point I, for some reason, ran parted -l again and resize2fs on the vg1-tpool. All I know is that I don't know what I'm doing at this point.

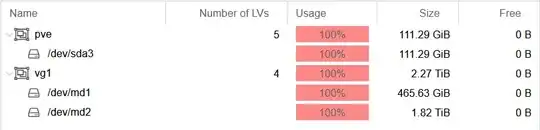

So to sum up, this is what I end up with (some info omitted for brevity):

root@host:~# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sda 8:0 0 111.8G 0 disk

├─sda1 8:1 0 1007K 0 part

├─sda2 8:2 0 512M 0 part

└─sda3 8:3 0 111.3G 0 part

├─pve-swap 253:0 0 8G 0 lvm [SWAP]

├─pve-root 253:1 0 18.5G 0 lvm /

├─pve-data_tmeta 253:2 0 1G 0 lvm

│ └─pve-data-tpool 253:4 0 82.8G 0 lvm

│ ├─pve-data 253:5 0 82.8G 0 lvm

│ ├─pve-vm--102--disk--0 253:6 0 15G 0 lvm

│ └─pve-vm--103--disk--0 253:7 0 4G 0 lvm

└─pve-data_tdata 253:3 0 82.8G 0 lvm

└─pve-data-tpool 253:4 0 82.8G 0 lvm

├─pve-data 253:5 0 82.8G 0 lvm

├─pve-vm--102--disk--0 253:6 0 15G 0 lvm

└─pve-vm--103--disk--0 253:7 0 4G 0 lvm

sdb 8:16 0 465.8G 0 disk

└─sdb1 8:17 0 465.8G 0 part

└─md1 9:1 0 465.7G 0 raid1

├─vg1-tpool_tmeta 253:8 0 108M 0 lvm

│ └─vg1-tpool-tpool 253:10 0 2.3T 0 lvm

│ ├─vg1-tpool 253:11 0 2.3T 0 lvm

│ ├─vg1-vm--100--disk--0 253:12 0 32G 0 lvm

│ ├─vg1-vm--100--disk--1 253:13 0 350G 0 lvm

│ └─vg1-vm--101--disk--0 253:14 0 32G 0 lvm

└─vg1-tpool_tdata 253:9 0 2.3T 0 lvm

└─vg1-tpool-tpool 253:10 0 2.3T 0 lvm

├─vg1-tpool 253:11 0 2.3T 0 lvm

├─vg1-vm--100--disk--0 253:12 0 32G 0 lvm

├─vg1-vm--100--disk--1 253:13 0 350G 0 lvm

└─vg1-vm--101--disk--0 253:14 0 32G 0 lvm

sdc 8:32 0 465.8G 0 disk

└─sdc1 8:33 0 465.8G 0 part

└─md1 9:1 0 465.7G 0 raid1

├─vg1-tpool_tmeta 253:8 0 108M 0 lvm

│ └─vg1-tpool-tpool 253:10 0 2.3T 0 lvm

│ ├─vg1-tpool 253:11 0 2.3T 0 lvm

│ ├─vg1-vm--100--disk--0 253:12 0 32G 0 lvm

│ ├─vg1-vm--100--disk--1 253:13 0 350G 0 lvm

│ └─vg1-vm--101--disk--0 253:14 0 32G 0 lvm

└─vg1-tpool_tdata 253:9 0 2.3T 0 lvm

└─vg1-tpool-tpool 253:10 0 2.3T 0 lvm

├─vg1-tpool 253:11 0 2.3T 0 lvm

├─vg1-vm--100--disk--0 253:12 0 32G 0 lvm

├─vg1-vm--100--disk--1 253:13 0 350G 0 lvm

└─vg1-vm--101--disk--0 253:14 0 32G 0 lvm

sdd 8:48 0 1.8T 0 disk

└─md2 9:2 0 1.8T 0 raid1

└─vg1-tpool_tdata 253:9 0 2.3T 0 lvm

└─vg1-tpool-tpool 253:10 0 2.3T 0 lvm

├─vg1-tpool 253:11 0 2.3T 0 lvm

├─vg1-vm--100--disk--0 253:12 0 32G 0 lvm

├─vg1-vm--100--disk--1 253:13 0 350G 0 lvm

└─vg1-vm--101--disk--0 253:14 0 32G 0 lvm

root@host:~# vgdisplay

--- Volume group ---

VG Name pve

VG Access read/write

VG Status resizable

VG Size <111.29 GiB

PE Size 4.00 MiB

Total PE 28489

Alloc PE / Size 28489 / <111.29 GiB

Free PE / Size 0 / 0

--- Volume group ---

VG Name vg1

VG Access read/write

VG Status resizable

VG Size 2.27 TiB

PE Size 4.00 MiB

Total PE 596101

Alloc PE / Size 596101 / 2.27 TiB

Free PE / Size 0 / 0
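As a sanity check on the vgdisplay numbers, each VG size should simply be the extent count times the extent size. A small sketch using only the figures printed above (the helper name `pe_to_gib` is made up for illustration, it is not an LVM tool):

```shell
#!/bin/sh
# Convert a physical-extent count to GiB, given the PE size in MiB.
# pe_to_gib is a hypothetical helper name used only for this check.
pe_to_gib() {
    awk -v pe="$1" -v mib="$2" 'BEGIN { printf "%.2f\n", pe * mib / 1024 }'
}

pve_gib=$(pe_to_gib 28489 4)     # VG pve: Total PE 28489, PE Size 4 MiB
vg1_gib=$(pe_to_gib 596101 4)    # VG vg1: Total PE 596101, PE Size 4 MiB

echo "pve: ${pve_gib} GiB"       # 111.29 GiB, matching "<111.29 GiB"
echo "vg1: ${vg1_gib} GiB"       # 2328.52 GiB, i.e. about 2.27 TiB
```

So the reported sizes are consistent, and with Free PE at 0 in both groups, every one of those extents is already assigned to some LV.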

root@host:~# lvdisplay vg1

--- Logical volume ---

LV Name tpool

VG Name vg1

LV Write Access read/write

LV Creation host, time host, 2020-09-11 00:05:40 +0200

LV Pool metadata tpool_tmeta

LV Pool data tpool_tdata

LV Status available

# open 4

LV Size 2.27 TiB

Allocated pool data 15.16%

Allocated metadata 50.58%

Current LE 596047

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:10
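Comparing the two outputs: vg1 has 596101 extents in total, while the pool shows 596047 (Current LE), so 54 extents are held by something other than pool data. The sketch below only does that arithmetic with the numbers above. Reading the 54 extents as two 108 MiB metadata volumes (tpool_tmeta plus a spare copy) is my assumption, since only tpool_tmeta shows up in lsblk.

```shell
#!/bin/sh
# All figures are copied from the vgdisplay/lvdisplay output above.
total_pe=596101   # vg1 Total PE
pool_le=596047    # tpool Current LE
pe_mib=4          # PE Size in MiB

# Extents in vg1 that are not part of the pool's data area.
other_pe=$((total_pe - pool_le))
other_mib=$((other_pe * pe_mib))

# Rough amount of pool data actually in use ("Allocated pool data 15.16%").
used_gib=$(awk -v le="$pool_le" -v mib="$pe_mib" -v pct=15.16 \
    'BEGIN { printf "%.0f\n", le * mib * pct / 100 / 1024 }')

echo "non-pool extents: ${other_pe} (${other_mib} MiB)"   # 54 (216 MiB)
echo "pool data in use: ~${used_gib} GiB"                 # ~353 GiB
```

In other words, the pool itself has been grown to cover practically the whole VG, even though only roughly 353 GiB of it is actually in use.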

What are the actual mistakes and how could I fix them? It seems like I've somehow allocated all the extents on every VG, so now I can't really do anything! Again, is it still possible to:

- Get the "free space" back, whatever that means

- Fix /dev/md2 so that I can pvmove from /dev/md1 properly