- Why does a virtual bridge need an IP address when a physical one does not?

It is a misconception that a virtual bridge needs an IP address: it does not.

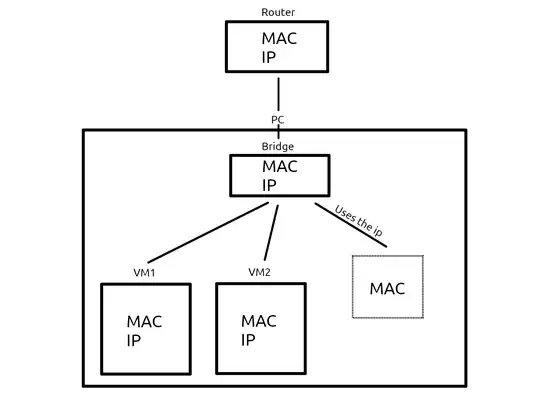

You actually can have a virtual bridge without an IP address. But then the host itself won't be reachable over IP on that physical interface at all: only the VMs will.

In an enterprise virtualization host, this can be useful: you might have a customer network that needs to be connected to customers' VMs. You might not want to grant access from that network to the virtualization host itself, only to the respective VMs. You would then have another, physically separate management network for administering your virtualization hosts. This network would be connected to the host through a separate NIC that is not a member of any virtual bridge.
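To illustrate the "bridge without an address" setup, the Python sketch below only builds (it does not run) the iproute2 commands for such a bridge. The helper name `bridge_commands`, the interface names `br-cust` and `eth1`, and the example address are hypothetical. The only difference between an IP-less bridge and one through which the host is reachable is the final, optional `ip addr add` line.

```python
from typing import List, Optional


def bridge_commands(bridge: str, uplink: str, host_cidr: Optional[str] = None) -> List[str]:
    """Return iproute2 commands that create `bridge` and attach `uplink` to it.

    With host_cidr=None no address is assigned: the bridge still forwards
    frames between the uplink and the VMs' tap devices, but the host itself
    is not reachable over IP on this segment.
    """
    cmds = [
        f"ip link add name {bridge} type bridge",
        f"ip link set dev {uplink} master {bridge}",
        f"ip link set dev {uplink} up",
        f"ip link set dev {bridge} up",
    ]
    if host_cidr is not None:
        # Optional: gives the host its own IP presence on the bridged segment.
        cmds.append(f"ip addr add {host_cidr} dev {bridge}")
    return cmds


# Customer-facing bridge with no host address (hypothetical interface names).
for cmd in bridge_commands("br-cust", "eth1"):
    print(cmd)
```

The management NIC would simply keep its own address and never appear in a `master` command.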

- How does the physical NIC know to use the virtual bridge's IP address when the NIC doesn't have one?

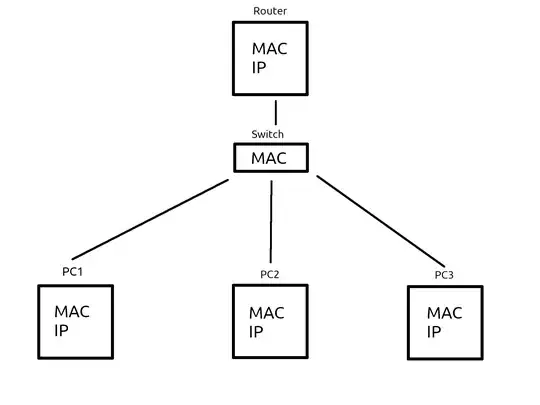

Unless the physical NIC has specific IPv4 acceleration features, an IP address is just payload data to the NIC. A basic physical NIC works at Layer 2 only, i.e. with MAC addresses. IP is a Layer-3 protocol, and handling it is usually left to the operating system's network stack. When you "configure an IP address on a NIC" with `ifconfig` or `ip addr`, you don't necessarily change the configuration of the physical hardware at all; you change an abstract construct that includes both the physical NIC and the OS-level IP protocol support associated with it.
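A minimal sketch of what "an IP address is just payload to the NIC" means: the Ethernet header a basic NIC deals with holds only MAC addresses and an EtherType, while the IPv4 destination sits further inside the payload, where only the OS stack looks. The MAC and IP addresses are made-up example values, and the IPv4 header is built only far enough to carry the addresses (its checksum is left at zero).

```python
import socket
import struct

ETHERTYPE_IPV4 = 0x0800


def parse_ethernet(frame: bytes):
    """Split an Ethernet II frame into (dst_mac, src_mac, ethertype, payload).

    This 14-byte header is all a basic NIC needs to look at.
    """
    dst, src, ethertype = struct.unpack("!6s6sH", frame[:14])
    return dst, src, ethertype, frame[14:]


def mac_str(mac: bytes) -> str:
    return ":".join(f"{b:02x}" for b in mac)


def ipv4_destination(payload: bytes) -> str:
    """Destination address from an IPv4 header (bytes 16-19): OS-stack territory."""
    return socket.inet_ntoa(payload[16:20])


# Example frame: made-up MACs, minimal IPv4 header (checksum left at zero).
ip_header = struct.pack(
    "!BBHHHBBH4s4s",
    0x45, 0, 20, 0, 0, 64, 1, 0,  # version/IHL, TOS, length, id, frag, TTL, proto, checksum
    socket.inet_aton("192.0.2.10"),
    socket.inet_aton("192.0.2.20"),
)
frame = struct.pack("!6s6sH", bytes.fromhex("525400123456"),
                    bytes.fromhex("525400abcdef"), ETHERTYPE_IPV4) + ip_header

dst, src, ethertype, payload = parse_ethernet(frame)
print("NIC sees:", mac_str(dst), mac_str(src), hex(ethertype))
print("OS sees: ", ipv4_destination(payload))
```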

When a physical NIC is configured as a member of a bridge, any Layer-3 acceleration features may need to be switched off anyway: as part of a bridge, the NIC needs to receive all incoming packets regardless of their destination MAC or IP address, and the bridge code decides whether each packet will be forwarded, and to which member of the bridge. A basic bridge does not need to care about IP addresses at all. Any Layer-3 functionality in a bridge goes beyond basic bridge functionality and is (or should be) optional in a virtual bridge.
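The receive-side difference can be modelled roughly as follows: a stand-alone NIC normally accepts only frames addressed to its own MAC address (plus broadcasts; multicast filtering is omitted here), whereas a bridge member is put into promiscuous mode so that every frame reaches the bridge code. This is a simplified model with hypothetical MAC values, not driver code.

```python
BROADCAST = b"\xff" * 6


def nic_accepts(dst_mac: bytes, own_mac: bytes, promiscuous: bool) -> bool:
    """Simplified NIC receive filter (multicast handling omitted)."""
    if promiscuous:
        # Bridge member: pass every frame up to the bridge code.
        return True
    # Stand-alone NIC: only frames addressed to us or to everyone.
    return dst_mac in (own_mac, BROADCAST)


HOST_NIC = bytes.fromhex("525400000001")  # hypothetical MAC of the physical NIC
VM1 = bytes.fromhex("525400000101")       # hypothetical MAC of a VM behind the bridge

print(nic_accepts(VM1, HOST_NIC, promiscuous=False))  # False: frame for the VM ignored
print(nic_accepts(VM1, HOST_NIC, promiscuous=True))   # True: handed to the bridge
```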

If the physical NIC is given an IP address while it is part of a bridge, that activates ARP on that NIC (and its driver). But ARP messages sent from that physical NIC won't reach the VMs: to reach the entire segment (as proper Layer-2 operation requires), the NIC driver would have to emit each ARP message as both an outgoing and an incoming message simultaneously, and the NIC driver has no code to do that.
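For reference, this is roughly what an ARP request looks like on the wire (RFC 826 layout, assuming Ethernet II and IPv4): an Ethernet broadcast whose payload asks who owns a target IP address. Because the destination MAC is the broadcast address, every station in the segment, VMs included, has to see it. The helper name and the addresses are illustrative only.

```python
import socket
import struct

BROADCAST = b"\xff" * 6


def arp_request(sender_mac: bytes, sender_ip: str, target_ip: str) -> bytes:
    """Build an Ethernet II frame carrying an ARP 'who-has' request."""
    eth = struct.pack("!6s6sH", BROADCAST, sender_mac, 0x0806)  # 0x0806 = ARP
    arp = struct.pack(
        "!HHBBH6s4s6s4s",
        1,             # hardware type: Ethernet
        0x0800,        # protocol type: IPv4
        6, 4,          # hardware / protocol address lengths
        1,             # opcode: request
        sender_mac, socket.inet_aton(sender_ip),
        b"\x00" * 6,   # target MAC unknown: that is what is being asked
        socket.inet_aton(target_ip),
    )
    return eth + arp


frame = arp_request(bytes.fromhex("525400000001"), "192.0.2.10", "192.0.2.21")
print(len(frame), frame[:6].hex(), frame[12:14].hex())
```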

Having a virtual bridge with VMs means that some parts of the bridge's IP segment will be physically outside the host, while others are contained within the VMs located inside the host. If the host were to use the NIC as usual to communicate with one of the VMs, the packets would be needlessly sent out of the host to the physical switch or router it is connected to, and from there they would have to come back into the host and through the bridge to the destination VM.

This would certainly be inefficient, and might not actually work at all: the physical switch to which the host with the virtual bridge is connected would normally have no reason to send any packets originating from that host back to the host itself.
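The reason such frames never come back is ordinary transparent-bridge filtering: a switch does not send a frame back out of the port it arrived on. Below is a simplified Python model; the MAC labels and port numbers are hypothetical, and the host and its VMs are assumed to have all been learned on switch port 3, where the host is cabled.

```python
from typing import Dict, Iterable, Set


def switch_egress(mac_table: Dict[str, int], all_ports: Iterable[int],
                  dst_mac: str, ingress: int) -> Set[int]:
    """Ports a transparent switch would send a unicast frame out of."""
    out = mac_table.get(dst_mac)
    if out is None:
        return set(all_ports) - {ingress}  # unknown destination: flood
    if out == ingress:
        return set()                       # destination is behind the ingress port: filter
    return {out}


# Hypothetical switch state: host and VM MACs were all learned on port 3.
table = {"host": 3, "vm1": 3, "vm2": 3, "pc1": 1, "router": 2}

print(switch_egress(table, [1, 2, 3], "vm1", ingress=3))  # set(): the frame is dropped
print(switch_egress(table, [1, 2, 3], "pc1", ingress=3))  # {1}
```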

Instead, the outgoing packets from the host to the bridged network segment must be sent through the bridge code, which first looks up which interface of the bridge (virtual or physical) would be "closest" to the destination. If the destination is known to the bridge, the outgoing packet is sent directly towards it. For the communication between the host and its VMs, this means the communication happens entirely within the physical host and does not use the physical network bandwidth outside the host at all.

If the destination MAC address is not known to the bridge, the outgoing packets are initially sent out all the bridge member interfaces: as soon as an answer is received, the bridge will learn the location of the destination of the initial packet and can go back to the efficient method of operation (as above).
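The learn / forward / flood behaviour described in the last two paragraphs can be sketched as a tiny learning bridge. It is a deliberately minimal model (no entry aging, no STP, no VLANs) with hypothetical names: `eth0` stands for the physical uplink, `vnet0`/`vnet1` for VM tap devices, and `br0` for the host's own port on the bridge.

```python
from typing import Dict, List, Set

BROADCAST = "ff:ff:ff:ff:ff:ff"


class LearningBridge:
    """Minimal transparent learning bridge: learn source MACs, forward or flood."""

    def __init__(self, ports: List[str]) -> None:
        self.ports = list(ports)
        self.fdb: Dict[str, str] = {}  # forwarding database: MAC -> port

    def receive(self, ingress: str, src_mac: str, dst_mac: str) -> Set[str]:
        # Learn: the sender is reachable through the port the frame came in on.
        self.fdb[src_mac] = ingress
        out = self.fdb.get(dst_mac)
        if dst_mac == BROADCAST or out is None:
            # Broadcast or not-yet-known destination: flood to every other port.
            return {p for p in self.ports if p != ingress}
        if out == ingress:
            return set()  # destination is on the side the frame came from
        return {out}


HOST = "52:54:00:00:00:01"  # hypothetical MACs
VM1 = "52:54:00:00:01:01"

bridge = LearningBridge(["eth0", "vnet0", "vnet1", "br0"])
print(bridge.receive("br0", HOST, VM1))    # VM1 unknown yet: flooded
print(bridge.receive("vnet0", VM1, HOST))  # host already learned on br0
print(bridge.receive("br0", HOST, VM1))    # VM1 now learned on vnet0
```

After the first reply, host-to-VM traffic stays on `vnet0` and never touches the physical uplink.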

When making ARP requests from the host containing the bridge, the requests must be broadcast both to the VMs and out the physical NIC so the requests will actually be sent to the whole network segment: the bridge code can do that, the individual physical NIC can't.
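To make the difference concrete, here is a toy model of which stations hear a broadcast (such as an ARP request) sent by the host, depending on whether it leaves through the physical NIC alone or through the bridge. The segment layout and station names are hypothetical.

```python
from typing import Dict, Set

# Hypothetical segment: stations reachable through each bridge member port.
SEGMENT: Dict[str, Set[str]] = {
    "eth0": {"router", "pc1"},  # physical uplink: machines out on the wire
    "vnet0": {"vm1"},           # tap device of a local VM
    "vnet1": {"vm2"},
}


def broadcast_receivers(via_bridge: bool, uplink: str = "eth0") -> Set[str]:
    """Stations that hear a broadcast sent by the host."""
    if via_bridge:
        ports = list(SEGMENT)  # the bridge floods out of every member port
    else:
        ports = [uplink]       # the NIC driver only puts the frame on the wire
    receivers: Set[str] = set()
    for port in ports:
        receivers |= SEGMENT[port]
    return receivers


print(sorted(broadcast_receivers(via_bridge=False)))  # ['pc1', 'router']
print(sorted(broadcast_receivers(via_bridge=True)))   # ['pc1', 'router', 'vm1', 'vm2']
```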

I think there is no requirement that a Linux bridge should be exclusively physical or virtual: I don't see why a Linux bridge could not have multiple physical interfaces and any number of VMs associated with it. But in an enterprise environment, you would not generally want to build a "do-everything host" like that. It could easily become a tricky, critical piece of infrastructure that cannot have any downtime ever; in other words, a headache for the system administrators.

- Why do VMs need to communicate with the bridge while physical machines don't actually communicate with a physical bridge directly?

Again, this is the same misconception: the VMs do not need an IP address on the bridge "to communicate with the bridge" as such.

But if you want the host and the VMs to be able to communicate with each other in the same IP network segment, assigning an IP address to the bridge device is the way to do it.

The IP address on the bridge device is there primarily to serve the communication needs of the host, not of the VMs; but it can allow the host to communicate with the VMs over IP efficiently, without looping through external devices, if that's what you want.
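Why the address belongs on the bridge device can be seen from how the host picks an outgoing interface: the connected route for a subnet points at the interface that holds an address in it, so with the address on `br0`, traffic to the VMs enters the bridge code. The sketch below is a simplified longest-prefix match over connected subnets only (no default route, metrics or policy routing), with hypothetical interface names and documentation-range addresses.

```python
import ipaddress
from typing import Dict, Optional


def pick_interface(addrs: Dict[str, str], dst: str) -> Optional[str]:
    """Interface whose connected subnet contains dst; the longest prefix wins."""
    target = ipaddress.ip_address(dst)
    best: Optional[str] = None
    best_len = -1
    for ifname, cidr in addrs.items():
        net = ipaddress.ip_interface(cidr).network
        if target in net and net.prefixlen > best_len:
            best, best_len = ifname, net.prefixlen
    return best


host_addrs = {
    "br0": "192.0.2.10/24",     # address on the bridge: same segment as the VMs
    "eth2": "198.51.100.5/24",  # separate management NIC, not in any bridge
}

print(pick_interface(host_addrs, "192.0.2.21"))    # br0
print(pick_interface(host_addrs, "198.51.100.9"))  # eth2
print(pick_interface(host_addrs, "203.0.113.1"))   # None: would need a default route
```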