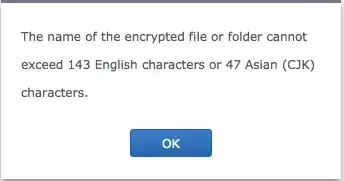

Some background first: I am trying to convert an unencrypted shared folder into an encrypted one on my Synology NAS, and I am seeing this error (see the screenshot above):

I would like to locate the offending files so that I can rename them. I came up with the following grep command: `grep -rle '[^\ ]\{143,\}' *`, but it outputs every file whose full path is longer than 143 characters, for example:

#recycle/Music/TO SORT/music/H/Hooligans----Heroes of Hifi/Metalcore Promotions - Heroes of Hifi - 03 Sly Like a Megan Fox.mp3

...

What I would like is for grep to split each path on `/` and then search each component separately. Any ideas for an efficient command to do this? The directory easily contains hundreds of thousands of files.
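
For reference, the kind of thing I have in mind could be sketched roughly as below. This is only a sketch under a few assumptions: `find_long_names` is a made-up helper name, I am assuming the limit applies to bytes rather than characters (hence `LC_ALL=C`), I am assuming the `find` and `awk` on the NAS behave like the common implementations, and it will misreport names that contain newlines. It swaps grep for `find` piped into `awk`, because `awk -F/` does the splitting on `/` directly.

```shell
# List every path under a directory whose last component (the file or
# directory name itself) is longer than a given limit.
# find_long_names is a hypothetical helper; 143 comes from the question above.
find_long_names() {
    dir=${1:-.}
    limit=${2:-143}
    # find prints every file and directory once, so each name is checked
    # exactly once as the last field of its own path.
    # -F/ splits the path on "/", making $NF the final component.
    # LC_ALL=C is meant to make length() count bytes (assumption about awk).
    find "$dir" | LC_ALL=C awk -F/ -v limit="$limit" 'length($NF) > limit'
}
```

Running `find_long_names . 143` from the top of the shared folder should then print only the entries whose own name exceeds the limit, regardless of how long the full path is. It is a single pass over the tree, so it should cope with hundreds of thousands of files.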