CentOS 7, kernel release 3.10.0-957.el7.x86_64, DRBD 9.0.16-1.

I have one resource configured. The drbd service is not enabled to start at boot time. I rebooted the two nodes. On the first node I run systemctl start drbd and get:

"DRBD's startup script waits for the peer node(s) to appear."

On the second node, when I run systemctl start drbd, I get:

drbd data: failed to create debugfs dentry

EDIT

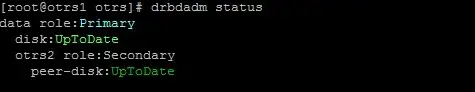

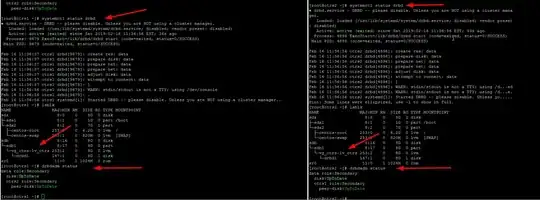

When I run drbdadm up resource_name on both nodes, with the drbd service disabled and not started, I get both nodes in the Secondary/UpToDate state, which is good.

EDIT2

data.res

All resources are started on otrs1. It has the Primary role but shows connecting for the remote node, and on the remote node I don't see the other node at all.

When I run pcs cluster stop --all and then run drbdadm up data on both nodes, all looks good.

Now when I mount /dev/drbd1 /opt/otrs on one of the nodes, that node gets auto-promoted to the Primary role.

Now when I umount and bring the resource down on both nodes and re-run drbdadm status, I get, as expected, "No currently configured DRBD found."
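For reference, the manual sequence described above can be sketched as a small script for one node, run after pcs cluster stop --all. This is only a sketch: the run wrapper, the manual_cycle function and the DRY_RUN, RES, DEV and MNT variables are hypothetical helpers added for illustration, while the commands themselves are the ones already quoted in this post. By default it only prints what it would do.

```shell
#!/bin/sh
# Manual DRBD test cycle for one node, with the Pacemaker cluster stopped.
# DRY_RUN, RES, DEV, MNT and the helper functions are illustrative names only.
DRY_RUN="${DRY_RUN:-1}"     # 1 = only print the commands, 0 = really run them
RES="${RES:-data}"          # DRBD resource name used in this post
DEV="${DEV:-/dev/drbd1}"    # DRBD device used in this post
MNT="${MNT:-/opt/otrs}"     # mount point used in this post

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

manual_cycle() {
    run drbdadm up "$RES"       # bring the resource up (repeat on the peer node)
    run drbdadm status "$RES"   # inspect roles, disk states and the peer connection
    run mount "$DEV" "$MNT"     # in my setup, mounting auto-promotes the node
    run umount "$MNT"           # unmount again
    run drbdadm down "$RES"     # take the resource down again
}

manual_cycle
```

Running it with DRY_RUN=0 as root executes the commands instead of printing them.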

Now, exactly the same happens when I run systemctl start drbd on both. On the first node the command appears to hang, but I guess it is waiting for the other node to start its services as well, right?

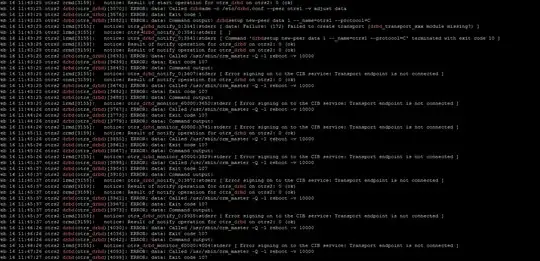

After a reboot, the cluster and resources start on node 1 but after putting it into standby mode, resources are not moved:

And here's what I see in journalctl -xe:

EDIT3

ok that's odd

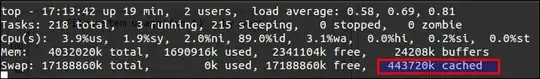

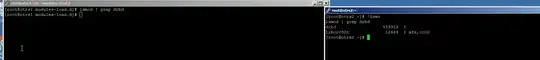

I was loading the drbd kernel module at boot via /etc/modules-load.d/drbd.conf on both nodes, but I disabled that. I rebooted, and to my surprise the module is loaded on one node, but without drbd_transport_tcp. Is pacemaker loading the drbd kernel module? I can't imagine that.

Now when I run systemctl disable pcsd; systemctl disable pacemaker; systemctl disable corosync on both nodes, reboot, and run lsmod | grep drbd, it returns no results. I don't get it :(
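To compare the two nodes after each reboot, a check along these lines could replace the bare lsmod | grep drbd. It is a minimal sketch: check_drbd_modules is a hypothetical helper name, and it assumes that /proc/modules (the file lsmod reads) lists one module per line with the module name in the first field.

```shell
#!/bin/sh
# Report whether the two DRBD kernel modules mentioned above are loaded.
# check_drbd_modules is an illustrative helper, not part of DRBD or Pacemaker.
# $1 is the module listing to read; it defaults to /proc/modules.
check_drbd_modules() {
    listing="${1:-/proc/modules}"
    for mod in drbd drbd_transport_tcp; do
        # Match on the exact module name in the first field, so that
        # "drbd" does not also match "drbd_transport_tcp".
        if awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$listing"; then
            echo "$mod: loaded"
        else
            echo "$mod: not loaded"
        fi
    done
}

check_drbd_modules
```

Running this on both nodes right after boot, and again after starting the cluster, would show at which point each module appears.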