I want to delete a huge directory containing more than 6 million files in more than 4000 subdirectories, about 26GB of data in total. Because I knew this would take a while, I used ionice to set the I/O priority to idle, so that I could continue doing other tasks while the files are deleted.

time ionice -c3 rm -vrf /tmp/huge-folder

However, my entire desktop environment is now very sluggish nonetheless. Google Chrome takes ages to open new tabs and load pages, and sometimes it even takes a while to open a new xterm window. In summary: I appear to get none of the benefits of reduced I/O priority on the rm process.

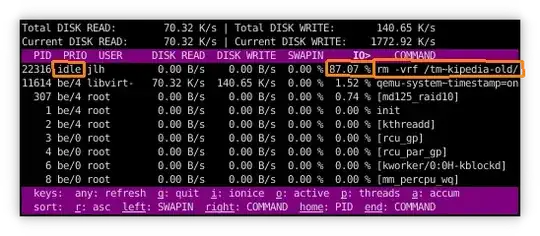

Inspecting the situation with iotop shows that some of the I/O time is spent in the rm process with the idle I/O priority that I want:

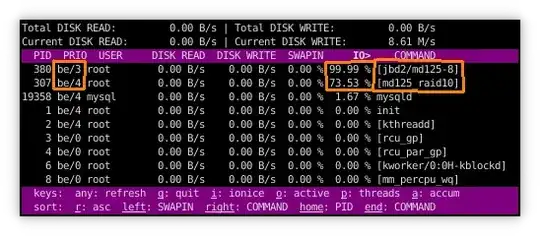

But then some other times the I/O time is spent in ext4's journaling process and the software raid process:

Note how they are using their own default I/O priority, which is probably the cause of the problem. jbd2 even runs at priority be/3, which is in fact a higher priority than the default be/4 of all my other desktop processes.
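To make that ordering concrete, here is a minimal sketch that ranks the priority strings as iotop prints them. The function name `ioprio_rank` and its numeric scale are purely illustrative (nothing the kernel or iotop exposes); it only encodes the documented rule that the realtime class beats best-effort, best-effort beats idle, and within a class a lower level number means higher priority.

```shell
#!/bin/sh
# Hypothetical helper: map an iotop-style PRIO string ("rt/0", "be/3",
# "be/4", "idle") to a number where a smaller value means higher I/O priority.
ioprio_rank() {
    case "$1" in
        rt/[0-7]) echo $(( ${1#rt/} )) ;;       # realtime class: 0..7
        be/[0-7]) echo $(( 10 + ${1#be/} )) ;;  # best-effort class: 10..17
        idle)     echo 20 ;;                    # idle class: served last
        *)        echo "unknown priority: $1" >&2; return 1 ;;
    esac
}

# The three priorities seen here: jbd2, ordinary desktop processes, the rm job.
for prio in be/3 be/4 idle; do
    printf '%s -> %s\n' "$prio" "$(ioprio_rank "$prio")"
done
```

So the journal thread (be/3) sorts ahead of my desktop processes (be/4), and both sort far ahead of the idle-class rm. The class and level of any single process can also be checked directly with `ionice -p <pid>`.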

Many other questions ask and answer what jbd2 is and why it's consuming I/O time, but that's not what I'm wondering about. My question is: Is there a way to truly get the idle I/O scheduling priority in this specific scenario? Obviously, applying ionice to jbd2 is a crazy idea.

Further setup info: This is an ext4 filesystem on top of a software RAID10 with 3 rotating disks. ext4 is formatted and mounted using default options (Debian defaults, in case those differ).