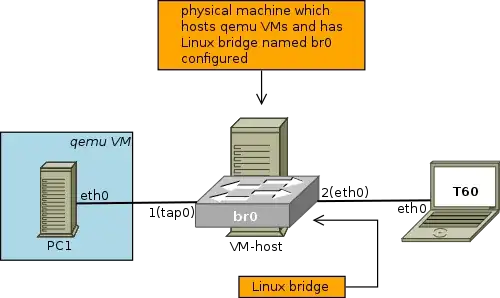

I built the setup shown above to compare the performance of the virtio-pci and e1000 drivers.

I expected much higher throughput with virtio-pci than with e1000, but the two performed identically.

Test with virtio-pci (192.168.0.126 is configured on T60 and 192.168.0.129 on PC1):

    root@PC1:~# grep hype /proc/cpuinfo
    flags : fpu de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pse36 clflush mmx fxsr sse sse2 syscall nx lm rep_good nopl pni vmx cx16 x2apic hypervisor lahf_lm tpr_shadow vnmi flexpriority ept vpid
    root@PC1:~# lspci -s 00:03.0 -v
    00:03.0 Ethernet controller: Red Hat, Inc Virtio network device
        Subsystem: Red Hat, Inc Device 0001
        Physical Slot: 3
        Flags: bus master, fast devsel, latency 0, IRQ 11
        I/O ports at c000 [size=32]
        Memory at febd1000 (32-bit, non-prefetchable) [size=4K]
        Expansion ROM at feb80000 [disabled] [size=256K]
        Capabilities: [40] MSI-X: Enable+ Count=3 Masked-
        Kernel driver in use: virtio-pci
    root@PC1:~# iperf -c 192.168.0.126 -d -t 30 -l 64
    ------------------------------------------------------------
    Server listening on TCP port 5001
    TCP window size: 85.3 KByte (default)
    ------------------------------------------------------------
    ------------------------------------------------------------
    Client connecting to 192.168.0.126, TCP port 5001
    TCP window size: 85.0 KByte (default)
    ------------------------------------------------------------
    [ 3] local 192.168.0.129 port 41573 connected with 192.168.0.126 port 5001
    [ 5] local 192.168.0.129 port 5001 connected with 192.168.0.126 port 44480
    [ ID] Interval Transfer Bandwidth
    [ 3] 0.0-30.0 sec 126 MBytes 35.4 Mbits/sec
    [ 5] 0.0-30.0 sec 126 MBytes 35.1 Mbits/sec
    root@PC1:~#

Test with e1000 (192.168.0.126 is configured on T60 and 192.168.0.129 on PC1):

    root@PC1:~# grep hype /proc/cpuinfo
    flags : fpu de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pse36 clflush mmx fxsr sse sse2 syscall nx lm rep_good nopl pni vmx cx16 x2apic hypervisor lahf_lm tpr_shadow vnmi flexpriority ept vpid
    root@PC1:~# lspci -s 00:03.0 -v
    00:03.0 Ethernet controller: Intel Corporation 82540EM Gigabit Ethernet Controller (rev 03)
        Subsystem: Red Hat, Inc QEMU Virtual Machine
        Physical Slot: 3
        Flags: bus master, fast devsel, latency 0, IRQ 11
        Memory at febc0000 (32-bit, non-prefetchable) [size=128K]
        I/O ports at c000 [size=64]
        Expansion ROM at feb80000 [disabled] [size=256K]
        Kernel driver in use: e1000
    root@PC1:~# iperf -c 192.168.0.126 -d -t 30 -l 64
    ------------------------------------------------------------
    Server listening on TCP port 5001
    TCP window size: 85.3 KByte (default)
    ------------------------------------------------------------
    ------------------------------------------------------------
    Client connecting to 192.168.0.126, TCP port 5001
    TCP window size: 85.0 KByte (default)
    ------------------------------------------------------------
    [ 3] local 192.168.0.129 port 42200 connected with 192.168.0.126 port 5001
    [ 5] local 192.168.0.129 port 5001 connected with 192.168.0.126 port 44481
    [ ID] Interval Transfer Bandwidth
    [ 3] 0.0-30.0 sec 126 MBytes 35.1 Mbits/sec
    [ 5] 0.0-30.0 sec 126 MBytes 35.1 Mbits/sec
    root@PC1:~#

With large packets, the bandwidth was ~900 Mbps with both drivers.
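To put the small-buffer numbers in perspective, here is a rough sketch that converts the reported bandwidth into application writes per second. It assumes iperf's Mbits/sec means 10^6 bits per second, and that `-l 64` sets the size of each write to the socket (TCP may coalesce several writes into one segment, so this is a write rate, not a packet rate). The function name `writes_per_second` is just mine for illustration.

```python
def writes_per_second(mbits_per_sec: float, write_len_bytes: int) -> float:
    """Convert an iperf bandwidth figure into application writes per second."""
    bytes_per_sec = mbits_per_sec * 1_000_000 / 8  # assumes Mbit = 10^6 bits
    return bytes_per_sec / write_len_bytes


# Client-side figures from the two "-l 64" runs above.
results = {"virtio-pci": 35.4, "e1000": 35.1}

for driver, mbps in results.items():
    rate = writes_per_second(mbps, 64)
    print(f"{driver}: {rate:,.0f} writes of 64 bytes per second")
```

Both runs come out at roughly 69,000 writes per second in each direction, so whatever limits the 64-byte test seems to be hit at the same point with either driver.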

When does the theoretically higher performance of virtio-pci come into play? Why did I see equal performance with e1000 and virtio-pci?