I formatted an MTD partition of 39 erase blocks (4.9 MiB) with UBIFS. The resulting file system holds only about 2.2 MB of incompressible data, even with the reserved blocks reduced to the minimum possible of 1 block (I know that's not good). This means that only about 45% of the partition is usable for data.

The same partition formatted with JFFS2 lets me write 4.6 MB of data, which is about 90% of the raw size and more than double what the UBIFS setup holds.

The problem is that I can't use JFFS2, because my OOB size of 64 bytes doesn't provide enough space for both the BCH8 and the JFFS2 OOB data, as described in a TI warning.

I have already read the FAQ chapters "Why does my UBIFS volume have significantly lower capacity than my equivalent JFFS2 volume?" and "Why does df report too little free space?", but I still can't believe that the overhead is this big.

Is there anything I can do to increase the free space of my (writable) ubifs volume?

Would I save space by merging ubi0 and ubi1 (more than just the reserved blocks)?

This is my setup:

$ mtdinfo -a

mtd10

Name: NAND.userdata

Type: nand

Eraseblock size: 131072 bytes, 128.0 KiB

Amount of eraseblocks: 39 (5111808 bytes, 4.9 MiB)

Minimum input/output unit size: 2048 bytes

Sub-page size: 512 bytes

OOB size: 64 bytes

Character device major/minor: 90:20

Bad blocks are allowed: true

Device is writable: true

$ ubinfo -a

ubi1

Volumes count: 1

Logical eraseblock size: 129024 bytes, 126.0 KiB

Total amount of logical eraseblocks: 39 (5031936 bytes, 4.8 MiB)

Amount of available logical eraseblocks: 0 (0 bytes)

Maximum count of volumes 128

Count of bad physical eraseblocks: 0

Count of reserved physical eraseblocks: 1

Current maximum erase counter value: 2

Minimum input/output unit size: 2048 bytes

Character device major/minor: 249:0

Present volumes: 0

Volume ID: 0 (on ubi1)

Type: dynamic

Alignment: 1

Size: 34 LEBs (4386816 bytes, 4.2 MiB)

State: OK

Name: userdata

Character device major/minor: 249:1

dmesg:

[ 1.340937] nand: device found, Manufacturer ID: 0x2c, Chip ID: 0xf1

[ 1.347903] nand: Micron MT29F1G08ABADAH4

[ 1.352108] nand: 128 MiB, SLC, erase size: 128 KiB, page size: 2048, OOB size: 64

[ 1.359782] nand: using OMAP_ECC_BCH8_CODE_HW ECC scheme

uname -a:

Linux 4.1.18-g543c284-dirty #3 PREEMPT Mon Jun 27 17:02:46 CEST 2016 armv7l GNU/Linux

Create & test ubifs:

# flash_erase /dev/mtd10 0 0

Erasing 128 Kibyte @ 4c0000 -- 100 % complete

# ubiformat /dev/mtd10 -s 512 -O 512

ubiformat: mtd10 (nand), size 5111808 bytes (4.9 MiB), 39 eraseblocks of 131072 bytes (128.0 KiB), min. I/O size 2048 bytes

libscan: scanning eraseblock 38 -- 100 % complete

ubiformat: 39 eraseblocks are supposedly empty

ubiformat: formatting eraseblock 38 -- 100 % complete

# ubiattach -d1 -m10 -b 1

UBI device number 1, total 39 LEBs (5031936 bytes, 4.8 MiB), available 34 LEBs (4386816 bytes, 4.2 MiB), LEB size 129024 bytes (126.0 KiB)

# ubimkvol /dev/ubi1 -N userdata -m

Set volume size to 4386816

Volume ID 0, size 34 LEBs (4386816 bytes, 4.2 MiB), LEB size 129024 bytes (126.0 KiB), dynamic, name "userdata", alignment 1

# mount -t ubifs ubi1:userdata /tmp/1

# df -h /tmp/1

Filesystem Size Used Avail Use% Mounted on

- 2.1M 20K 2.0M 2% /tmp/1

# dd if=/dev/urandom of=/tmp/1/bigfile bs=4096

dd: error writing '/tmp/1/bigfile': No space left on device

550+0 records in

549+0 records out

2248704 bytes (2.2 MB) copied, 1.66865 s, 1.3 MB/s

# ls -l /tmp/1/bigfile

-rw-r--r-- 1 root root 2248704 Jan 1 00:07 /tmp/1/bigfile

# sync

# df -h /tmp/1

Filesystem Size Used Avail Use% Mounted on

- 2.1M 2.1M 0 100% /tmp/1
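For reference, the UBI-level numbers in the transcript above can be reproduced with a small sketch. It assumes that UBI keeps 4 PEBs for itself (2 for the volume table, 1 for wear-leveling, 1 for atomic LEB change) in addition to the bad-block reserve; the variable names are only illustrative. It does not explain the further loss inside UBIFS, only the step from 39 PEBs to 34 LEBs.

```shell
#!/bin/sh
# Rough UBI space accounting for mtd10, using values from mtdinfo/ubinfo.
# Assumption: UBI uses 4 PEBs internally (2 volume table, 1 wear-leveling,
# 1 atomic LEB change) on top of the bad-block reserve set by `ubiattach -b`.

peb_size=131072    # physical eraseblock size (mtdinfo)
page_size=2048     # minimum I/O unit (mtdinfo)
pebs=39            # eraseblocks in mtd10
bad_reserve=1      # "Count of reserved physical eraseblocks" (ubinfo)
ubi_internal=4     # assumed UBI-internal PEBs, see comment above

# With 512-byte sub-pages the EC and VID headers fit into the first page,
# so each LEB is one page smaller than its PEB.
leb_size=$((peb_size - page_size))
avail_lebs=$((pebs - bad_reserve - ubi_internal))
volume_bytes=$((avail_lebs * leb_size))

echo "LEB size:       $leb_size bytes"
echo "available LEBs: $avail_lebs"
echo "volume size:    $volume_bytes bytes"
```

The three values agree with what ubiattach and ubimkvol report, so the UBI layer itself accounts for only 5 of the 39 blocks.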

Create & test jffs2:

# mkdir /tmp/empty.d

# mkfs.jffs2 -s 2048 -r /tmp/empty.d -o /tmp/empty.jffs2

# flash_erase /dev/mtd10 0 0

Erasing 128 Kibyte @ 4c0000 -- 100 % complete

# nandwrite /dev/mtd10 /tmp/empty.jffs2

Writing data to block 0 at offset 0x0

# mount -t jffs2 /dev/mtdblock10 /tmp/1

# df -h /tmp/1

Filesystem Size Used Avail Use% Mounted on

- 4.9M 384K 4.5M 8% /tmp/1

# dd if=/dev/urandom of=/tmp/1/bigfile bs=4096

dd: error writing '/tmp/1/bigfile': No space left on device

1129+0 records in

1128+0 records out

4620288 bytes (4.6 MB) copied, 4.54715 s, 1.0 MB/s

# ls -l /tmp/1/bigfile

-rw-r--r-- 1 root root 4620288 Jan 1 00:20 /tmp/1/bigfile

# sync

# df -h /tmp/1

Filesystem Size Used Avail Use% Mounted on

- 4.9M 4.9M 0 100% /tmp/1
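To compare both tests on the same basis, here is a minimal sketch that works from the byte counts reported by dd and mtdinfo rather than from the rounded MB/MiB figures. The helper name pct is made up for this example.

```shell
#!/bin/sh
# Usable share of the raw partition, from the byte counts in the transcripts.
raw=5111808      # mtd10 size in bytes (mtdinfo)
ubifs=2248704    # bytes dd managed to write to the UBIFS volume
jffs2=4620288    # bytes dd managed to write to the JFFS2 partition

# pct PART WHOLE: PART as a percentage of WHOLE, one decimal place.
pct() {
    LC_ALL=C awk -v p="$1" -v w="$2" 'BEGIN { printf "%.1f\n", 100 * p / w }'
}

echo "UBIFS usable: $(pct "$ubifs" "$raw")%"
echo "JFFS2 usable: $(pct "$jffs2" "$raw")%"
```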

Update:

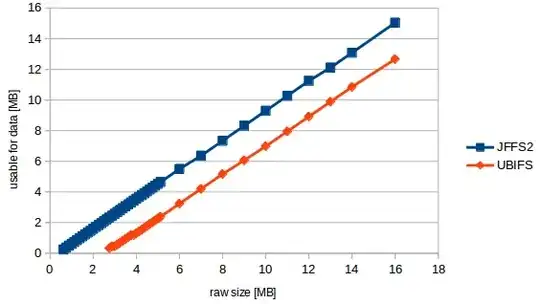

I did some mass measurements which resulted in the following chart:

So I can formulate my question more specific now:

The "formula" seems to be usable_size_mb = (raw_size_mb - 2.3831) * 0.89423077

In other words: no matter how big my MTD partition is, about 2.38 MB are always lost. That is the size of roughly 19 erase blocks. On top of that comes a file system overhead of 10.6% on the remaining space, which is high but not unexpected for UBIFS.

By the way, while running the tests I got kernel warnings that at least 17 erase blocks are needed (= 2.176 MB). However, the smallest MTD partition that successfully ran through the test had 22 blocks (2.816 MB).
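The fitted line can be evaluated with a short sketch like the one below. It assumes the "MB" in the formula means 0.128 MB per erase block, which is what the figures 17 blocks = 2.176 MB and 22 blocks = 2.816 MB imply; the function names are hypothetical.

```shell
#!/bin/sh
# Evaluate usable_size_mb = (raw_size_mb - 2.3831) * 0.89423077.
# Assumption: 1 erase block = 0.128 "MB", inferred from 17 blocks = 2.176 MB.
block_mb=0.128
fixed_loss_mb=2.3831
slope=0.89423077

# usable_mb RAW_MB: predicted usable size for a given raw size.
usable_mb() {
    LC_ALL=C awk -v raw="$1" -v c="$fixed_loss_mb" -v k="$slope" \
        'BEGIN { printf "%.3f\n", (raw - c) * k }'
}

# blocks_lost: the fixed loss expressed in erase blocks.
blocks_lost() {
    LC_ALL=C awk -v c="$fixed_loss_mb" -v b="$block_mb" \
        'BEGIN { printf "%.1f\n", c / b }'
}

echo "fixed loss: $(blocks_lost) erase blocks"
echo "22 blocks (2.816 MB) -> $(usable_mb 2.816) MB usable"
echo "39 blocks (4.992 MB) -> $(usable_mb 4.992) MB usable"
```

Under that unit assumption the fixed loss comes out at about 18.6 blocks, in line with the roughly 19 blocks mentioned above.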